How Flickr Works with Thorn to Detect Harmful Content

February 17, 2023

When it comes to keeping platforms safe and free of child sexual abuse material (CSAM), we know that all platforms must play a role in detecting, removing, and reporting this harmful content. That’s the only way we can end the viral spread of abuse and build a safer internet for everyone – especially children.

Flickr, the image and video hosting site, is partnering with Thorn to do just that. The company is on a mission to protect its millions of users – despite the massive amount of content that is uploaded to the site each day.

To do so, Flickr uses Safer – Thorn’s technology that is designed to help platforms detect, review, and report CSAM at scale.

The Challenge

Despite the huge volume of content uploaded to Flickr daily, the company’s Trust and Safety team has always been deeply committed to keeping its platform safe.

The core technology used in CSAM detection is hashing and matching – which essentially computes an image’s “digital fingerprint” and looks for a match among the fingerprints of known CSAM images. The inherent challenge with this method, however, is that it doesn’t help to find new or previously unknown CSAM, which was a priority for Flickr.
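To make the hashing-and-matching idea concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not Safer's actual implementation or API: the function names are hypothetical, the byte strings are harmless placeholders, and SHA-256 stands in for the fingerprint. An exact cryptographic hash like this only matches byte-identical files; production systems often also use perceptual hashes that tolerate resizing or re-encoding.

```python
import hashlib


def fingerprint(data: bytes) -> str:
    """Return a hex digest used as the file's fingerprint (SHA-256 is an assumption)."""
    return hashlib.sha256(data).hexdigest()


def is_known(data: bytes, known_hashes: set[str]) -> bool:
    """Check whether the file's fingerprint appears in the set of known fingerprints."""
    return fingerprint(data) in known_hashes


# Placeholder byte strings stand in for file contents (hypothetical data).
known_hashes = {fingerprint(b"previously flagged file")}

print(is_known(b"previously flagged file", known_hashes))  # True
print(is_known(b"never-before-seen file", known_hashes))   # False
```

The second lookup shows the limitation described above: a file that is not already in the known set can never match, no matter what it contains.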

In order to detect novel CSAM, they knew they needed to use artificial intelligence.

The Solution

In 2019, Thorn’s data science team began developing a CSAM Image Classifier – a machine learning classification model that predicts the likelihood that a file contains imagery of child sexual abuse. This technology was added to Thorn’s Safer product in 2020, and Flickr, a Safer customer since 2019, was one of the first adopters of this new tool.

Flickr’s Trust and Safety team incorporated the technology to detect images that probably would not have been discovered otherwise.
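As a rough illustration of how a likelihood score from a classifier might feed a moderation workflow, the sketch below filters predictions by a threshold and sorts them so the highest-scoring files are reviewed first. Everything here is an assumption made for illustration: the `Prediction` type, the `build_review_queue` function, the scores, and the 0.8 threshold are hypothetical and do not reflect how Safer or Flickr actually configure the classifier.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    file_id: str
    score: float  # hypothetical likelihood in [0, 1] returned by a classifier


def build_review_queue(predictions: list[Prediction], threshold: float = 0.8) -> list[Prediction]:
    """Keep predictions at or above the threshold, highest score first."""
    flagged = [p for p in predictions if p.score >= threshold]
    return sorted(flagged, key=lambda p: p.score, reverse=True)


# Made-up scores for three files (illustrative only).
predictions = [
    Prediction("a", 0.12),
    Prediction("b", 0.95),
    Prediction("c", 0.83),
]

queue = build_review_queue(predictions)
print([p.file_id for p in queue])  # ['b', 'c']
```

In a design like this, the threshold trades reviewer workload against the risk of missing content, and human moderators still review every flagged file.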

The Results

It didn’t take long for Flickr to see the immense benefits of the CSAM Image Classifier. One recent Classifier hit led to the discovery of 2,000 previously unknown images of CSAM. Once the images were reported to the National Center for Missing and Exploited Children (NCMEC), law enforcement initiated an investigation.

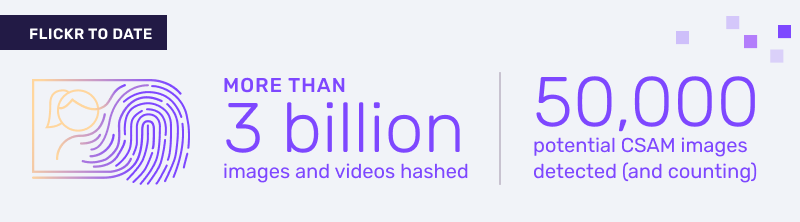

To date, Flickr – which has a team of fewer than 10 full-time Trust and Safety employees – has hashed more than 3 billion images and videos and detected 50,000 potential CSAM images (and counting).

This all translates into more reports being sent to NCMEC, more investigations, and a bigger difference in the lives of child victims.

“[Safer and the CSAM Image Classifier] take a huge weight off the shoulders of our content moderators, who are able to proactively pursue and prioritize harmful content,” said Jace Pomales, Trust and Safety Manager at Flickr.