Technical Innovation: How Thorn uses technology to transform child protection with a safer internet

July 8, 2025

Child sexual abuse and exploitation represent the most urgent child safety challenges of our digital age. From grooming and sextortion to the production and sharing of child sexual abuse material (CSAM), these interrelated threats create complex harms for children worldwide. Behind each instance of exploitation is a child experiencing trauma that can have lasting impacts. As technology evolves, so do the methods perpetrators use to exploit children—creating an environment where protection efforts must constantly adapt and scale.

The challenge is immense:

- In 2024 alone, more than 100 files of child sexual abuse material were reported each minute.

- In 2023, 812 reports of sexual extortion were submitted on average per week to NCMEC.

- NCMEC saw a 192% increase in online enticement reports between 2023 and 2024.

These numbers show abuse material represents just one facet of a broader landscape of abuse. Children face grooming, sextortion, deepfakes, and other forms of harmful exploitation. When these threats go undetected, children remain vulnerable to ongoing exploitation, and perpetrators continue operating with impunity.

Technical innovation: A core pillar of Thorn’s strategy

Technical innovation represents one of Thorn’s four pillars of child safety, serving as the technological foundation that enables all our child protection tools. By developing cutting-edge solutions through an iterative problem-solution process, we build scalable technologies that integrate with and enhance our other strategic pillars:

- Our Research and Insights give us early visibility into emerging threats, so we can rapidly provide a technology response.

- Our Child Victim Identification tools help investigators more quickly find children who are being sexually abused, protecting children from active abuse.

- Our Platform Safety solutions enable tech platforms to detect and prevent exploitation at scale.

This comprehensive approach ensures that our technical innovations don’t exist in isolation but work in concert with our other initiatives to create a robust safety net for children online.

A powerful example of our research and insights translating into technical innovation is the development of Scene-Sensitive Video Hashing (SSVH). Thorn identified that video-based CSAM was becoming an increasingly prevalent and sophisticated form of abuse material. Existing detection tools focused primarily on image material, leaving video as a critical gap in the child safety ecosystem. In response, our technical innovation team developed one of the first video hashing and matching algorithms tailored specifically for CSAM detection. SSVH uses perceptual hashing to identify visually unique scenes within videos, allowing our CSAM Image Classifier to score the likelihood of each scene containing abuse material. The collection of CSAM scene hashes makes up the video’s hash. This breakthrough technology has been deployed through our Platform Safety tools since 2020.
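The general shape of scene-based video hashing can be sketched in Python. This is a toy illustration under stated assumptions, not Thorn’s SSVH implementation: the "average hash", the `cut_distance` and `min_score` parameters, the tiny 2x2 frames, and the `placeholder_scorer` function are all hypothetical stand-ins. A real system would use a robust perceptual hash and a trained image classifier in place of the placeholder.

```python
def average_hash(frame):
    """Toy perceptual hash: one bit per pixel, set when brighter than the mean."""
    flat = [value for row in frame for value in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for value in flat:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits

def hamming_distance(a, b):
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")

def split_into_scenes(frames, cut_distance=1):
    """Start a new scene when consecutive frame hashes differ by more than cut_distance bits."""
    scenes = []
    previous_hash = None
    for frame in frames:
        current_hash = average_hash(frame)
        if previous_hash is None or hamming_distance(current_hash, previous_hash) > cut_distance:
            # Keep the first frame of each scene as its key frame.
            scenes.append((current_hash, frame))
        previous_hash = current_hash
    return scenes

def video_hash(frames, scorer, min_score=0.5, cut_distance=1):
    """Collect the hashes of scenes whose key frame scores at or above min_score."""
    return [
        scene_hash
        for scene_hash, key_frame in split_into_scenes(frames, cut_distance)
        if scorer(key_frame) >= min_score
    ]

def placeholder_scorer(frame):
    """Arbitrary stand-in for a trained classifier: flags frames with a bright top-left pixel."""
    return 1.0 if frame[0][0] > 128 else 0.0

# Hypothetical 2x2 grayscale frames standing in for a decoded video.
frame_a = [[0, 255], [0, 255]]
frame_a2 = [[5, 250], [5, 250]]   # same scene, slightly different pixel values
frame_b = [[255, 255], [0, 0]]
frame_c = [[255, 0], [0, 255]]
frames = [frame_a, frame_a2, frame_b, frame_b, frame_c]

print([scene_hash for scene_hash, _ in split_into_scenes(frames)])  # [5, 12, 9]
print(video_hash(frames, placeholder_scorer))                        # [12, 9]
```

Five frames collapse into three scenes, and only the scenes the scorer flags contribute to the video’s hash, which keeps the fingerprint small and focused on the content that matters for matching.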

The technology behind child protection

As you can imagine, the sheer volume of child sexual abuse material and exploitative messages far outweighs what human moderators could ever review. So, how do we solve this problem? By developing technologies that serve as a force for good:

- Advanced CSAM detection systems

Our machine learning classifiers can find new and unknown abuse images and videos. Our hashing and matching solutions can find known image and video CSAM. These technologies are used to prioritize and triage abuse material, which can accelerate the work to identify children currently being abused and combat revictimization.

- Text-based exploitation detection

Beyond images and videos, our technology identifies text conversations related to CSAM, sextortion, and other sexual harms against children. Detecting these harmful conversations creates opportunities for early intervention before exploitation escalates.

- Emerging threat prevention

Our technical teams develop forward-looking solutions to address new challenges, including AI-generated CSAM, evolving grooming tactics, and sextortion schemes that target children.

What is a classifier exactly?

Classifiers are algorithms that use machine learning to sort data into categories automatically.

For example, when an email goes to your spam folder, there’s a classifier at work.

It has been trained on data to determine which emails are most likely to be spam and which are not. As it is fed more of those emails, and users continue to tell it whether it is right or wrong, it gets better and better at sorting them. The power these classifiers unlock is the ability to label new data by using what they have learned from historical data, in this case to predict whether new emails are likely to be spam.
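To make the spam example concrete, here is a minimal sketch of a classifier in Python: a tiny naive Bayes model trained on a handful of invented messages. The training data, the function names, and the choice of naive Bayes are illustrative assumptions, not a description of how any production spam filter or Thorn’s classifiers work.

```python
import math
from collections import Counter

# Invented training data for illustration only: (message, label) pairs.
TRAINING = [
    ("win a free prize now", "spam"),
    ("claim your free money", "spam"),
    ("free prize waiting claim now", "spam"),
    ("meeting moved to monday", "ham"),
    ("lunch on monday with the team", "ham"),
    ("notes from the team meeting", "ham"),
]

def train(examples):
    """Count words per label and messages per label."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.split())
    return word_counts, label_counts

def classify(text, word_counts, label_counts):
    """Return the label with the higher log-probability under naive Bayes."""
    vocabulary = set(word_counts["spam"]) | set(word_counts["ham"])
    total_messages = sum(label_counts.values())
    scores = {}
    for label, counts in word_counts.items():
        # Prior: how common this label was in the training data.
        score = math.log(label_counts[label] / total_messages)
        total_words = sum(counts.values())
        for word in text.split():
            # Laplace smoothing so an unseen word does not zero out the score.
            score += math.log((counts[word] + 1) / (total_words + len(vocabulary)))
        scores[label] = score
    return max(scores, key=scores.get)

word_counts, label_counts = train(TRAINING)
print(classify("free prize now", word_counts, label_counts))       # spam
print(classify("team meeting monday", word_counts, label_counts))  # ham
```

The model never saw either test message during training; it labels them using word statistics learned from the historical examples, which is the same principle at work when a classifier labels new content at much larger scale.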

Thorn’s machine learning classification can find new or unknown CSAM in both images and videos, as well as text-based child sexual exploitation (CSE).

These technologies are then deployed in our Child Victim Identification and Platform Safety tools to protect children at scale. This makes them a powerful piece of the digital safety net that protects children from sexual abuse and exploitation.

Here’s how different partners across the child protection ecosystem use this technology:

- Law enforcement can identify victims faster as the classifier elevates unknown CSAM images and videos during investigations.

- Technology platforms can expand detection capabilities and scale the discovery of previously unseen or unreported CSAM. They can also detect text conversations that indicate suspected imminent or ongoing child sexual abuse.

What is hashing and matching?

Hashing and matching represents one of the most foundational and impactful technologies in child protection. At its core, hashing converts a file into a unique digital fingerprint: a string of numbers generated through an algorithm. The hash of each file is then compared against comprehensive databases of hashes of known CSAM, without ever exposing the actual content to human reviewers. When our systems detect a match, the harmful material can be immediately flagged for removal.

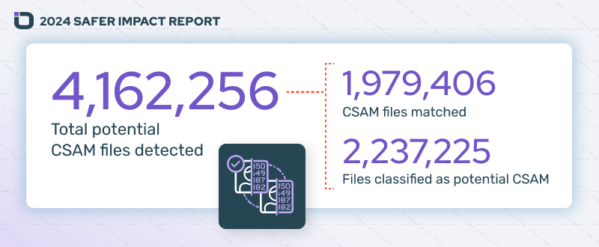
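The hashing and matching steps described above can be sketched in Python using neutral toy data. The sketch assumes a simple "average hash" over tiny grayscale grids and a hypothetical `max_distance` threshold. It illustrates the general idea only and does not reflect the algorithms or thresholds used in Safer.

```python
import hashlib

def exact_hash(data: bytes) -> str:
    """Cryptographic hash: identical bytes always give an identical fingerprint."""
    return hashlib.sha256(data).hexdigest()

def average_hash(pixels):
    """Toy perceptual hash: one bit per pixel, set when brighter than the mean."""
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for value in flat:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits

def hamming_distance(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")

def is_match(candidate, known_hashes, max_distance=2):
    """True when the candidate is within max_distance bits of any known hash."""
    return any(hamming_distance(candidate, known) <= max_distance for known in known_hashes)

# Hypothetical 4x4 grayscale grids standing in for downscaled images.
original = [
    [10, 200, 10, 200],
    [200, 10, 200, 10],
    [10, 200, 10, 200],
    [200, 10, 200, 10],
]
# The same picture, slightly brightened (for example after re-encoding).
altered = [[value + 5 for value in row] for row in original]
# An unrelated picture.
unrelated = [
    [200, 200, 10, 10],
    [200, 200, 10, 10],
    [10, 10, 200, 200],
    [10, 10, 200, 200],
]

# The database stores only hashes, never the underlying content.
known_hashes = {average_hash(original)}

print(is_match(average_hash(altered), known_hashes))    # True
print(is_match(average_hash(unrelated), known_hashes))  # False
print(exact_hash(b"file-a") == exact_hash(b"file-a"))   # True
```

A cryptographic hash such as SHA-256 only matches byte-identical copies, while a perceptual hash tolerates small changes such as brightness shifts. Both approaches compare fingerprints, so the matching step never needs to display the content itself.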

Through our Safer product, we’ve deployed a large database of verified hashes—76.6 million and growing—enabling our customers to cast a wide net for detection. In 2024 alone, we processed over 112.3 billion images and videos, helping customers identify 4,162,256 files of suspected CSAM to remove from circulation.

How does child safety technology help?

New CSAM may depict a child who is actively being abused. Perpetrators groom and sextort children in real time via conversation. Utilizing classifiers can help to significantly reduce the time it takes to find a victim and remove them from harm, and hashing and matching algorithms can be used to flag known material for removal to prevent revictimization.

However, finding these image, video and text indicators of imminent and ongoing child sexual abuse and revictimization often relies on manual processes that place the burden on human reviewers or user reports. To put it in perspective, you would need a team of hundreds of people with limitless hours to achieve what a classifier can do through automation.

Just like the technology we all use, the tools perpetrators deploy change and evolve. Thorn’s technical innovation is informed by our research and insights, which help us respond to new and emerging threats like grooming, sextortion, and AI-generated CSAM.

A Flickr Success Story

Popular image and video hosting site Flickr uses Thorn’s CSAM Classifier to help their reviewers sort through the mountain of new content that gets uploaded to their site every day.

As Flickr’s Trust and Safety Manager, Jace Pomales, summarized it, “We don’t have a million bodies to throw at this problem, so having the right tooling is really important to us.”

One recent classifier hit led to the discovery of 2,000 previously unknown images of CSAM. Once reported to NCMEC, law enforcement conducted an investigation, and a child was rescued from active abuse. That’s the power of this life-changing technology.

Technology must be a force for good if we are to stay ahead of the threats children face in a digital world. Our products embrace cutting-edge technology to transform how children are protected from sexual abuse and exploitation. It’s because of our generous supporters and donors that our work is possible.

If you work in the technology industry and are interested in utilizing Safer and the CSAM Classifier for your online platform, please contact info@safer.io. If you work in law enforcement, you can contact info@thorn.org or fill out this application.