Today, the internet is Safer

March 30, 2026

5 Minute Read

At Thorn, two of our core mission pillars are building innovative technology and advancing platform safety. Key to both is Safer, our solution for detecting child sexual abuse material (CSAM) and child sexual exploitation (CSE). This versatile product allows tech platforms to find and report CSAM and text-based harms. In 2025, more companies than ever deployed Safer on their platforms. This widespread commitment to child safety is key to building a safer internet and using technology as a force for good.

Safer’s 2025 impact

Safer’s customers span a wide range of industries, but all of them host user-generated content or generative AI features.

Safer empowers their teams to detect, review, and report CSAM and text-based CSE at scale. The scope of this detection is critical. It means their content moderators and trust and safety teams can detect CSAM among the millions of files uploaded and flag potential exploitation across the millions of messages shared, which saves time and speeds up their response. Just as importantly, Safer allows teams to report CSAM or instances of online enticement to central reporting agencies, like the National Center for Missing & Exploited Children (NCMEC), which is critical for child victim identification.

Safer’s customers rely on our predictive artificial intelligence and a comprehensive hash database to help them find CSAM and potential exploitation. With our customers’ help, we’re making strides toward reducing online sexual harms against children and creating a safer internet.

Total files processed

In 2025, Safer processed 415.4 billion files submitted by our customers. Today, the Safer community comprises more than 80 platforms, whose millions of users share an incredible amount of content daily. This provides a strong foundation for the important work of preventing the repeated and viral sharing of CSAM online.

To further extend the impact of our detection solutions, we partnered with Hive to offer Safer to their customers via an integration. Safer’s 2025 impact through the Hive integration includes nearly 4.3 billion files processed for CSAM detection.

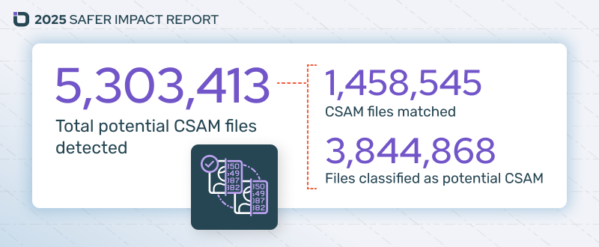

Total potential CSAM files detected

Safer detected nearly 1.5 million images and videos containing known CSAM in 2025. This means Safer matched the files to hash values of known CSAM verified by trusted partners. A hash is like a digital fingerprint, and using it allows Safer to programmatically determine whether the file has previously been verified as CSAM by NCMEC or other NGOs.
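To make the fingerprint idea concrete, here is a minimal sketch of hash matching in Python. It is an illustration only, not Safer’s implementation: it uses SHA-256 as a stand-in hash, and the function names and the placeholder hash list are hypothetical. A cryptographic hash like this only matches byte-for-byte identical files, and this post does not describe how SaferHash or SSVH work internally.

```python
import hashlib


def file_fingerprint(data: bytes) -> str:
    """Return the SHA-256 hex digest of a file's bytes (stand-in hash)."""
    return hashlib.sha256(data).hexdigest()


def is_known(data: bytes, known_hashes: set[str]) -> bool:
    """Check whether a file's fingerprint appears in a verified hash list."""
    return file_fingerprint(data) in known_hashes


# Hypothetical verified-hash list, built here from placeholder bytes.
known_hashes = {file_fingerprint(b"previously verified file")}

print(is_known(b"previously verified file", known_hashes))  # True
print(is_known(b"some other upload", known_hashes))         # False
```

Because the lookup compares fingerprints rather than the files themselves, a platform can check uploads against a verified list without holding the original material.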

Last year, we substantially strengthened Safer’s detection capabilities with a massive expansion of hash data. The import included:

- 1.6 million new SaferHash (image) hashes, bringing our total to 6.3 million

- 50 million new SSVH (video) hashes, bringing our total to 64 million

Maintaining the most current and comprehensive data is essential and ensures our customers have access to robust image and video detection.

In addition to detecting known CSAM, our predictive AI detected more than 3.84 million files of potential novel CSAM. Safer’s image and video classifiers use machine learning to predict whether new content is likely to be CSAM and flag it for further review. Once novel CSAM is identified and verified, it can be added to the hash library so that future copies are detected as known CSAM.

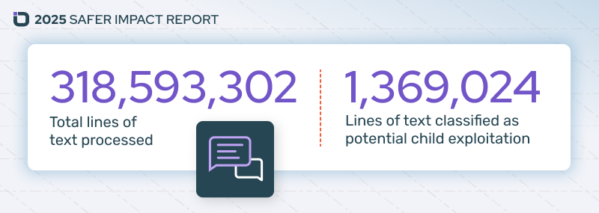

Total lines of text processed

Safer launched a text classifier feature in 2024 and, in 2025 alone, processed more than 318.59 million lines of text. This capability offers a whole new dimension of detection, helping platforms identify sextortion and other abuse behaviors happening via text or messaging features. In all, more than 1.3 million lines of text indicating potential child exploitation were identified, helping content moderators respond to potentially threatening behavior.

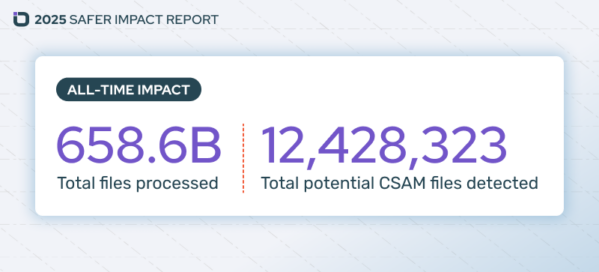

Safer’s all-time impact

Safer’s impact continues to grow rapidly, with the community almost tripling the all-time total of files processed in a single year. Since 2019, Safer has processed 658.6 billion files and 334 million lines of text, resulting in the detection of more than 12.4 million potential CSAM files and nearly 1.4 million instances of potential child exploitation. Every file processed and every potential match made helps create a safer internet for children and content platform users.
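The growth figure can be checked from the totals reported in this post. The short Python calculation below is a sketch that assumes the all-time total includes 2025, so the pre-2025 total is the difference between the two reported numbers. The variable names are illustrative.

```python
# Figures reported in this post, in billions of files.
all_time_files_bn = 658.6   # processed since 2019, including 2025
files_2025_bn = 415.4       # processed in 2025 alone

# Assumption: the all-time total before 2025 is the difference.
before_2025_bn = all_time_files_bn - files_2025_bn

# How many times larger the all-time total became during 2025.
growth = all_time_files_bn / before_2025_bn

print(f"{before_2025_bn:.1f} billion before 2025, {growth:.2f}x after")
# 243.2 billion before 2025, 2.71x after
```

On those assumptions, the all-time total grew by a factor of roughly 2.7 during 2025.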

Build a Safer internet

Curtailing platform misuse and addressing online sexual harms against children requires an “all-hands” approach. Many trust and safety teams are being asked to do more with fewer and fewer resources. Thorn is here to help content moderators with a powerful, multi-layered tool that provides robust detection solutions for technology teams. Together, we can transform how kids are protected from sexual abuse and exploitation in the digital age.